Universities, schools, and L&D organizations everywhere face a pressing need to move face-to-face, live group training quickly to virtual and online programs.

Inevitably, especially as we rush to make this transition, some of what we do will work and some of it will not. We have to figure out, quickly and on the fly, what is working and what is not, and keep adjusting. This is the job of evaluation. Doing evaluation is not optional; we have to do it. But we can choose to be smart about it. We cannot afford to spend time on measures that do not yield worthwhile, actionable data. We need evaluation that is both valid and actionable: barking up the trees that matter most for overall organizational success, right now.

Forget questions of “business impact” (Level 4 in Kirkpatrick terminology), ROE, and other evaluation pursuits that will waste our precious time. What matters most is doing more of what helps our L&D participants make use of the learning we’re providing, and finding out what is getting in the way when they struggle to make good use of it.

My advice: leave the “business outcome” metrics to the people who own them, and focus instead on securing the behaviour changes that, once enacted, will drive those metrics in the right direction. In this sense, Kirkpatrick Level 3 evaluation is the most important. And do not wait the standard 3-6 months to investigate it. If people aren’t changing their behaviour right away, something is wrong and needs to be fixed.

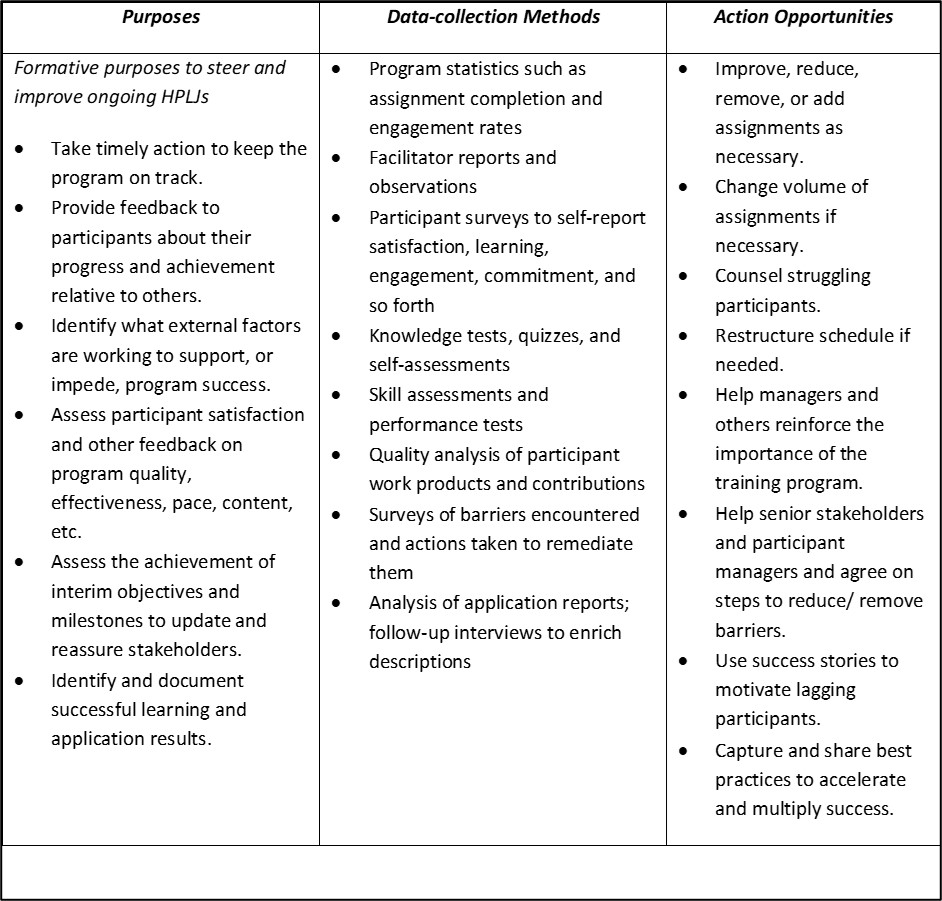

Here are the evaluation purposes and methods that will yield the greatest fruit as new virtual programs launch, along with notes on the kinds of useful actions the resulting data can help drive. None of them requires sophisticated data methods or analyses; this is not rocket science.